As more and more companies and governments make data available online, the demand and need to access and process Internet-based data is growing fast. Problematically, much of the data is available only through a front-end web-app that is designed for ad-hoc queries. If the need is to get bulk amounts of online data, automation becomes essential. Real-world automation requires that any number of methods for extracting data from the Internet be made available to support the desired business process. This is made even more so by the variances of web systems design, e.g. some apps have web services and many do not.

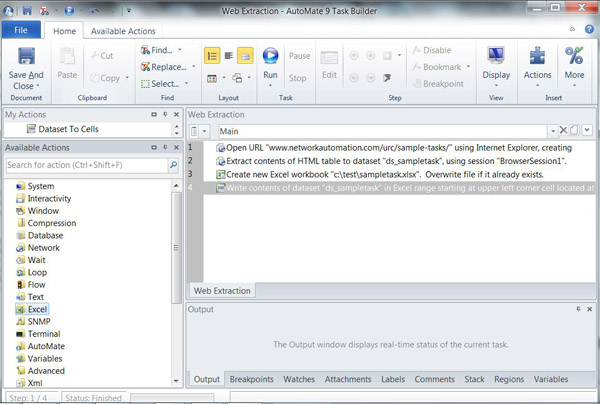

Automate supports the ability to integrate with web services or to navigate through webpages, interact with web data, initiate searches and logins, enter data, click through links, and extract tables and entire webpage source information. It does this through the action library with actions for web browsers, HTTP, XML and web services, all which can be invoked without writing code. For this example, we use Excel automation methods.

This article teaches you to automate basic web scraping and table extraction automation. To start, we utilize two sets of actions, the web browser and the Excel actions, to create the task.

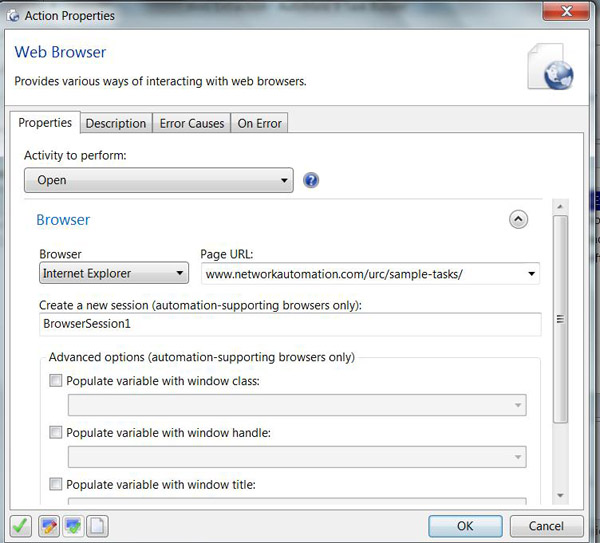

Step 1

In the first step of the task, the “Open” web browser activity opens the website, specified in the Page URL field, to mimic manually opening the Sample Task webpage.

Notice in the image, there is a browser dropdown arrow indicating multiple browser support (such as Internet Explorer, Firefox, Safari and Chrome).

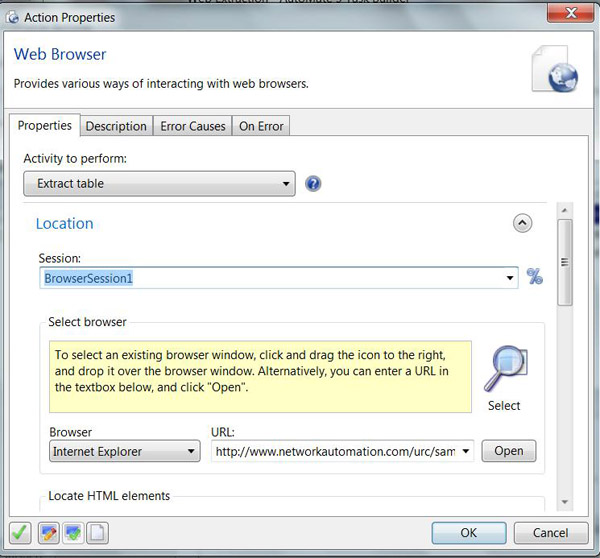

Step 2

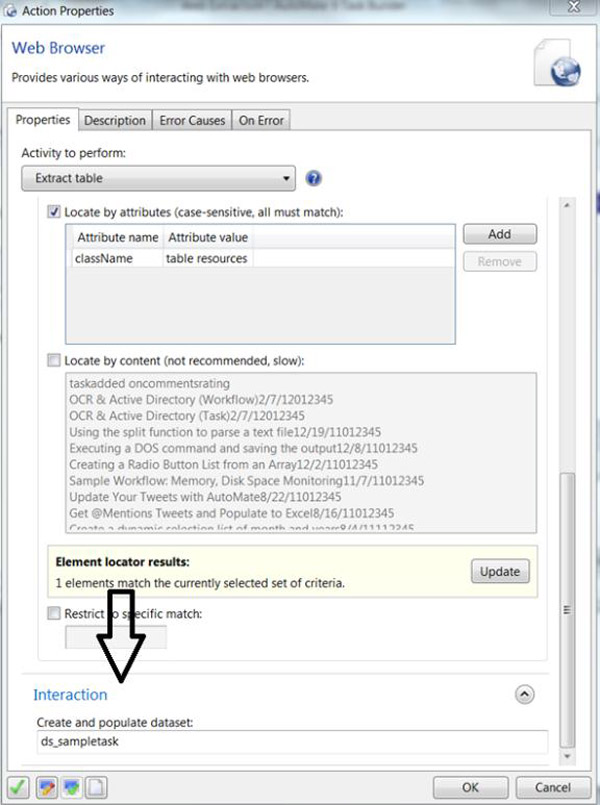

In the second step of the task, we use the “Extract Table” web browser action. This requires selecting a browser, locating the HTML element and creating a dataset to populate the table information for importing into Excel.

After selecting your preferred web browser, use the Magnifying Glass icon to drag it to the opened webpage. You can also manually enter the webpage link into the URL area under "Select browser."

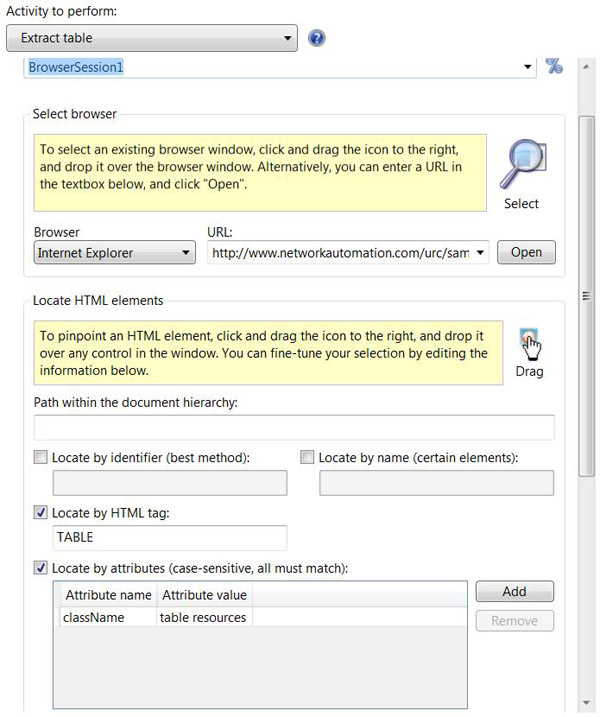

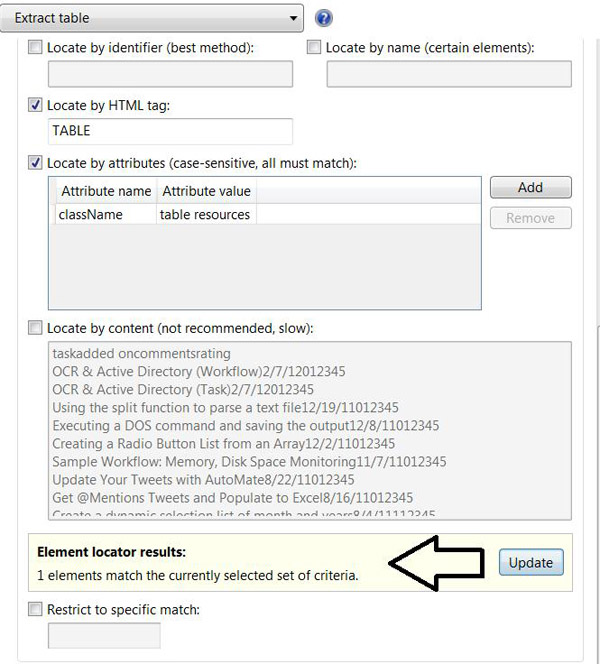

Locating the HTML Elements requires using the Hand icon to point to the website containing the table for extraction. Drag the hand to the table to select the HTML components. The controls and components collected from the HTML elements always identify the correct table. Notice how the “Locate by HTML tag” and “Locate by attributes (case sensitive, all must match)” identifies the HTML location and discovery as shown in the image below.

When locating HTML elements, Automate matches the locator element results with the criteria specified.

Creating and populating the dataset under the Interaction area allows us to place a value on each of the columns in the table for integration with other applications. In this example, we will be using Excel.

Step 3

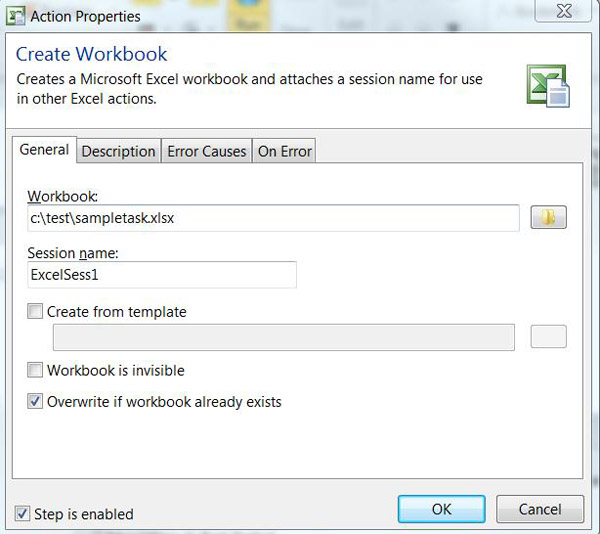

In the third step of the task, we use a “Create an Excel Workbook” action to create an Excel spreadsheet and establish an Excel session for interaction. The only requirement with this action is to specify the path and a new spreadsheet name, such as C:\test\sampletask.xlsx.

Step 4

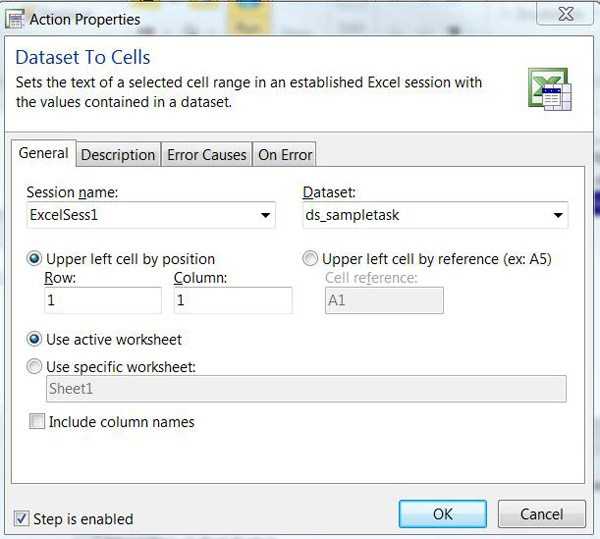

The last step in the task is the “Dataset to Cells” action. This is used to set the text of the selected cell range in the established Excel session with the values contained in the dataset created in the Web Browser Extract Table (Step 2). Starting at Row 1, Column 1, the entire table is placed into the newly created Excel spreadsheet starting at Row A1. And that’s it!

Get Started

See for yourself how Automate can help your organization with Data Scraping and Extraction. Start a free trial today!