My introduction to IT (or data processing, as it was called in the 1980s) was as a trainee operator for an insurance company. I worked on an IBM mainframe where the machine filled an area as big as a soccer pitch, you developed long muscular arms from carrying magnetic tapes, and IBM customer engineers had their own permanent desk in the office. The whole environment was mind-boggling for an 18-year-old who had only two years of college and some brief work experience behind him, most of which involved paper tape and punched cards.

What struck me was how many people were employed in IT. In my company alone there were about 300, including managers, developers, technical support, printer operators, tape monkeys, and a plethora of people looking constantly tired and working a shift pattern that only they could understand. But what on earth did everyone do?

It took a good six months before I managed to match roles to the various teams in the company and decided on the direction in which I wanted to steer my career. I wanted to know what happened to all those printouts. What did those odd graphs and tables represent? What did all that chin-scratching mean when users complained about slow systems?

As I earned my stripes by working a lot of shifts late into the night, I ate a lot of pizza at strange hours and gradually progressed through the ranks. I also learned a lot about managing systems, particularly about the art of performance management.

Mastering Performance (and Pizza)

Having served my apprenticeship, I eventually secured a support role and was tasked with looking after disk space occupancy and system response times. Having a very analytical mind and a penchant for statistics, I was in my element. I had tape monkeys loading tapes for me, shift operators running my jobs, and print operators delivering reams of paper to my desk on a regular basis.

We had a number of user departments in the company including Finance, Policy Administration, Customer Services, and Actuaries. My key responsibilities were to:

- Measure how much disk space each department had consumed in the past month

- Measure the system response times experienced by the end users

- Compare figures to last month and last year

- Report on my findings and provide recommendations

My work, especially the reporting element, became more and more important as demands on expensive system resources increased. Our business went from predominantly 9:00 a.m. to 5:00 p.m. Monday through Friday to 8:00 a.m. to 8:00 p.m. six days a week, which quickly progressed to seven days a week. In my work, there was no room for error. My reports were discussed at senior management level and board meetings, so I had to be at the top of my game 100 percent of the time.

A notable trend at that time was for the IT department to justify what it did for the business. It was no longer adequate for us to simply manage the hardware, software, network, and infrastructure; we had to prove we were managing it. Again, my reporting helped enormously, and I saw my handiwork displayed on company noticeboards and newsletters throughout the organization.

I spent many an evening pulling my hair out trying to ensure that the figures made sense, wading through the ever-increasing amount of paperwork that arrived on my desk—not only from the mainframe, but as technology moved on, from midrange, open systems, and also Windows servers. I became busier and busier until after too many late nights and pizzas I had an assistant join me. What bliss.

My “team” continued to follow my tried and trusted process and we fast became a vital cog not only within IT, but in the company as a whole. We had input into budget proposals for new hardware and influenced how many extra staff could be taken on by different departments without bringing the system to a halt over the next quarter. In fact, during my time in this particular role, my team successfully justified a budgetary spend of $1.5M on IT infrastructure as a result of our reporting.

I left that position some time ago now, although a substantial part of my current role involves travelling to customer data centers across the world to talk about system performance, the challenges they face, and how I can make their lives easier. Frequently, I revisit my performance management mantra, which I have refined over the years and broken down into three essential steps. I find that it’s still as relevant today as it has always been.

The Performance Management Mantra

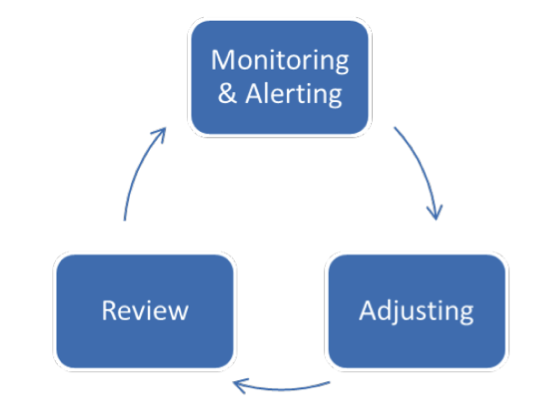

In my opinion, there are three steps to successful performance management: monitoring and alerting, adjusting, and review. Each step provides critical information that feeds into the next.

Performance management cycle

Step 1: Monitoring and Alerting

This is your starting point. I find it’s best to think of it as a two-pronged attack: first the monitoring aspect and then alerting.

Elements of Monitoring

Speak to people in the business.

Consult as many departments as possible to become familiar with their plans for their department in terms of staff increases or reductions over the coming period.

At one of my previous positions, my predecessor discovered one day that the call center had hired an additional 80 agents almost overnight, a recruitment process to which he’d been oblivious. As you’d expect, this had serious ramifications on the central host machine, which he looked after.

The end result was an out-of-budget spend of $80,000 on a processor upgrade just to ensure the system would be able to cope with the new demands during peak periods. All of this could have been avoided (and properly budgeted for) if he’d spoken to the business in advance.

Find out peak throughput.

In other words, know when the business is at its busiest. Depending on the business you are supporting, this may be linked to particular times of the year, specific days of the month, or even hours of the day.

Many IT folks have natural tendencies to work in isolation and find it difficult to think about the bigger picture, but it’s important to ask questions like, when does the business require additional machine resources? What is the impact if the system is slow or even unavailable for a period of time? Not only does this provide you with valuable information, but it also clearly demonstrates to the business that IT—who you are representing—cares about what they do and what they are trying to achieve.

Establish the baseline.

Once you have established when and where the demands for system resources are, you can establish a known constant or baseline. Your baseline will provide a clearly defined starting point in terms of figures and time from which you can judge improvements or make comparisons with previous results during the adjustment part of the process.
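As a rough illustration of what a baseline can look like in practice, the sketch below groups utilization samples by hour of the day and records the mean and peak for each hour. The sample data, the function name `build_baseline`, and the choice of mean and peak as summary figures are all assumptions made for this example; your own tooling will supply real measurements and may favor other statistics.

```python
from collections import defaultdict
from statistics import mean


def build_baseline(samples):
    """Group (hour_of_day, cpu_percent) samples by hour and summarize each hour.

    Returns {hour: {"mean": ..., "peak": ...}}.
    """
    by_hour = defaultdict(list)
    for hour, cpu_percent in samples:
        by_hour[hour].append(cpu_percent)
    return {
        hour: {"mean": mean(values), "peak": max(values)}
        for hour, values in sorted(by_hour.items())
    }


# Hypothetical measurements: (hour of day, CPU utilization in percent)
samples = [(9, 40.0), (9, 50.0), (14, 70.0), (14, 90.0), (14, 80.0)]
baseline = build_baseline(samples)

for hour, summary in baseline.items():
    print(f"{hour:02d}:00  mean={summary['mean']:.1f}%  peak={summary['peak']:.1f}%")
```

Keeping the baseline as plain figures per time slot makes it easy to compare against later measurements once adjustments have been made.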

Elements of Alerting

Define thresholds.

From the baseline you’ve established, along with your newly acquired knowledge of the business, you now know the resource requirements on a department basis throughout the day, week, month, and year. Using this information, you can define thresholds for elements of the system for which you want to be alerted should the thresholds be breached. These thresholds, depending on platform, are normally definable—albeit at a rather basic level—as part of the tools that come with the operating system.
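One possible way to turn a baseline into thresholds is to place them in the headroom between the observed peak and full capacity. The sketch below does exactly that; the fractions (50 percent and 80 percent of the headroom), the function name, and the per-department peak figures are illustrative assumptions, not recommended values.

```python
def thresholds_from_baseline(baseline_peak, capacity=100.0,
                             warn_fraction=0.5, crit_fraction=0.8):
    """Place warning and critical thresholds in the headroom above the baseline peak.

    The default fractions are illustrative assumptions only.
    """
    if not 0 <= baseline_peak <= capacity:
        raise ValueError("baseline_peak must lie between 0 and capacity")
    headroom = capacity - baseline_peak
    return {
        "warning": round(baseline_peak + warn_fraction * headroom, 1),
        "critical": round(baseline_peak + crit_fraction * headroom, 1),
    }


# Hypothetical baseline peaks (percent of disk used) per department
baseline_peaks = {"Finance": 60.0, "Customer Services": 75.0}
thresholds = {
    dept: thresholds_from_baseline(peak) for dept, peak in baseline_peaks.items()
}
print(thresholds)
```

Deriving thresholds from the baseline, rather than hard-coding them, means they can be recalculated whenever a review produces a new baseline.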

Manage by exception.

You may be fortunate enough to have the kind of systems management software in place that allows advanced, flexible, rule-based criteria. I can strongly recommend deploying these kinds of monitoring solutions in order to achieve a major objective that system administrators of busy systems should really strive for: managing by exception.

The aim is to reduce (or completely eliminate) the need to manually monitor the system and instead receive alerts in a format that works for you regardless of where you are. For example, notification could come by email, SMS, or a visible or audible alert on your preferred mobile device.

Whichever method or combination of methods you choose, you must be instantly notified if a pre-defined threshold is breached. These breaches are classed as exceptions. If they occur, the alert will effectively tap you on the shoulder—even when you are away from your desk—so you’re likely to find more time to concentrate on higher priority projects rather than troubleshooting issues.
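To make the idea of an exception concrete, here is a minimal sketch of a monitor that stays silent while readings are normal and only calls a notification function when a threshold is crossed or cleared. The class name `ExceptionMonitor` and the `notify` callback are hypothetical; in a real deployment the callback would hand off to whatever email, SMS, or mobile alerting your systems management software provides.

```python
class ExceptionMonitor:
    """Call notify() only when a metric crosses its threshold, not on every sample."""

    def __init__(self, threshold, notify):
        self.threshold = threshold
        self.notify = notify      # any callable that accepts a message string
        self.in_breach = False

    def record(self, value):
        breached = value > self.threshold
        if breached and not self.in_breach:
            self.notify(f"BREACH: {value} exceeds {self.threshold}")
        elif not breached and self.in_breach:
            self.notify(f"CLEARED: {value} is back under {self.threshold}")
        self.in_breach = breached


# Collect messages in a list here; a real notifier would send email or SMS.
messages = []
monitor = ExceptionMonitor(threshold=80, notify=messages.append)
for reading in [70, 85, 90, 75, 60]:
    monitor.record(reading)

print(messages)
```

Five readings produce only two notifications, one when the breach starts and one when it clears, which is the point of managing by exception.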

Step 2: Adjusting

This is the most exciting step as you can impact the experience of the end user, in some cases with a couple of keystrokes. What the end user obviously doesn’t realize (or care about) is all the background work and number crunching you’ve undertaken that allows you to make these changes. There’s a great sense of achievement in having a happy set of users.

Based on your monitoring and alerting findings, you’re now in a position of power where you know exactly what resources you have at your disposal and what the demands on those resources are likely to be. Teamed with alerting triggers that will tell you when there is an impending issue or bottleneck that requires attention, you now have the ammunition required to make changes to system parameters and settings that will have a positive impact on performance.

Elements of Adjusting

Record every adjustment.

Always record all of your adjustments, including both the before and after settings. This enables you to revert should the changes not have the desired effect.

Change one thing at a time.

If you only ever change one thing at a time, you will be able to determine which change has had a positive or negative result.

Both of these practices feed into the graphs and tables you produce in the review part of the process.
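The two practices above can be sketched as a small change log that applies one setting at a time, stores the before and after values, and records a revert as a new entry rather than erasing history. The class names and the setting names (`max_active_jobs`, `pool_size_mb`) are hypothetical and stand in for whatever parameters your platform exposes.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Adjustment:
    setting: str
    before: object
    after: object
    reason: str
    made_at: datetime


class ChangeLog:
    """Apply one setting change at a time and keep the before/after values."""

    def __init__(self, settings):
        self.settings = settings
        self.history = []

    def apply(self, setting, new_value, reason):
        entry = Adjustment(setting, self.settings[setting], new_value,
                           reason, datetime.now(timezone.utc))
        self.settings[setting] = new_value
        self.history.append(entry)
        return entry

    def revert_last(self):
        # A revert is itself an adjustment, so it is logged rather than erased.
        last = self.history[-1]
        return self.apply(last.setting, last.before, f"revert: {last.reason}")


# Hypothetical system settings
settings = {"max_active_jobs": 50, "pool_size_mb": 2048}
log = ChangeLog(settings)
log.apply("max_active_jobs", 75, "call center peak at 10:00")
log.revert_last()

for entry in log.history:
    print(entry.setting, entry.before, "->", entry.after, "|", entry.reason)
```

Because every entry carries a reason and a timestamp, the log can be lifted straight into the adjustments section of the review document.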

Step 3: Review

This step is often neglected, but it’s the most important of the three steps. Admittedly, it can be very time-consuming due to the necessary analysis of the statistics gathered and the adjustments made since the last review, but how else do you know whether you got things right?

The review part of the process takes the form of a document that may be used by a number of different people, so it must provide a clear and concise message. This is an opportunity for you to sing your own praises. In essence, the resulting documentation will be confirmation that you’ve done your job properly and everything is running as expected, provided you’ve followed steps one and two properly.

Elements of a Review

Include baseline settings.

You will need to illustrate how you arrived at the baseline of settings that make up each system. The baseline is the result of previous reviews and of your discussions with the business. It’s quite acceptable to extract specific quotes from people you have consulted in the business to add validity. This section can contain as much detail as you see fit.

Describe what monitoring and alerting processes you have put in place and why.

For example, you might explain something like this:

As disk space exceeded 70 percent, I opted to monitor the 80 percent and 90 percent thresholds with increasing levels of alerting to ensure sufficient disk space remains available for the period.

- >80% Disk Space Used – Email the support team.

- >90% Disk Space Used – Send an SMS to the support team. If nobody responds within 30 minutes, automatically clear down the test environment and escalate to the technical services manager or IT operations manager.
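The tiers in this example can be expressed as a small decision function. The sketch below is only one reading of the rules: the action names are hypothetical labels, only the highest matching tier is returned (on the assumption that the 80 percent email was already sent on the way up), and "within 30 minutes" is interpreted as 30 or more minutes without acknowledgment. The helper `percent_used` relies on the standard library's `shutil.disk_usage`, and the path it checks is an assumption.

```python
import shutil


def percent_used(path="/"):
    """Return the percentage of disk space used on the filesystem holding path."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total * 100


def disk_alert_actions(used_percent, minutes_unacknowledged=0):
    """Map disk usage to the alert actions for the highest tier that applies."""
    actions = []
    if used_percent > 90:
        actions.append("sms_support_team")
        if minutes_unacknowledged >= 30:
            actions.append("clear_test_environment")
            actions.append("escalate_to_manager")
    elif used_percent > 80:
        actions.append("email_support_team")
    return actions


print(disk_alert_actions(75))
print(disk_alert_actions(85))
print(disk_alert_actions(93))
print(disk_alert_actions(93, minutes_unacknowledged=45))
```

Keeping the decision separate from the delivery mechanism means the same rules can be reviewed, tested, and quoted in the review document without touching the code that actually sends the email or SMS.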

State the adjustments you’ve made.

From the baseline, provide details of what changes you have made to the system, both before and after settings, plus the results of those changes. These results should be backed up by statistics and figures extracted where applicable.

The adjustments should also form part of your new baseline and you should include a section detailing why the adjustments were made. Maybe they were the result of an outage or a bottleneck experienced by users or maybe they were planned changes as a result of your meetings with the business.

Illustrate key elements.

You should also include a section that illustrates, in both tabular and graphical forms, the key elements that make up the system. This should always include core elements (CPU, disk, and memory) as a minimum and should break these elements down into usage on a one-minute, 15-minute, hourly, daily, monthly, annual, and on-going basis.
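Breaking figures down by interval usually means rolling fine-grained samples up into coarser buckets. The following sketch averages one-minute readings into 15-minute and hourly buckets; the function name `roll_up`, the sample data, and the use of a simple mean are assumptions for illustration, and it only handles bucket widths that divide evenly into a day.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def roll_up(samples, bucket_minutes):
    """Average (timestamp, value) samples into fixed-width buckets within each day."""
    buckets = defaultdict(list)
    for timestamp, value in samples:
        minute_of_day = timestamp.hour * 60 + timestamp.minute
        start = minute_of_day - minute_of_day % bucket_minutes
        bucket_start = timestamp.replace(
            hour=start // 60, minute=start % 60, second=0, microsecond=0)
        buckets[bucket_start].append(value)
    return {start: mean(values) for start, values in sorted(buckets.items())}


# Hypothetical one-minute CPU readings from 09:00 to 09:29, rising from 40% to 69%
samples = [(datetime(2024, 1, 8, 9, m), 40.0 + m) for m in range(30)]

fifteen_minute = roll_up(samples, 15)
hourly = roll_up(samples, 60)

for start, value in fifteen_minute.items():
    print(start.strftime("%H:%M"), f"{value:.1f}%")
```

The same rolled-up figures can feed both the tables and the graphs, so the two views of the data never disagree.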

I find that senior management responds well to graphical representation, especially when it can be backed up with figures and split down into areas such as departments, business units, or customers.

Provide your recommendations.

This section should highlight any significant changes (hardware, software, or infrastructure) that you believe would have a positive impact on system performance and the business. These recommendations should be clearly laid out, stating what they are, why you are recommending they should be done, when they should be scheduled, and what the associated costs are for implementation, including manpower.

Each recommendation should also draw on earlier sections in the review document to underline its importance. You should also highlight possible scenarios if the recommendation is ignored and not carried out. For example, overall response times may suffer or the support teams may spend an extra three hours per week firefighting to keep disk space utilization to acceptable levels.

Performance Management Sounds Like Hard Work

You’re right. It’s a lot of hard work, especially at first when you’re starting from nothing and probably already have more than enough to do on a daily basis. But fear not, help is at hand!

There are some fantastic tools available now to help you set up and automate these processes. Choose carefully, though: in my experience, the enterprise-wide solutions that promise the world end up delivering very little due to their complexities and over-reliance on forms of scripting that are usually over-complicated and time-consuming to maintain.

You need to choose a tool that makes your job easier by simplifying or even automating some of the key manual processes, including the setup and on-going support of your performance management process. Some options for modern performance management tools can be found here.

By following the three steps outlined in this article, you’ll benefit from economies of scale: the hard work is done up front, so the more systems you’re asked to manage, the greater the efficiencies you reap.
