Many IT professionals consider database transaction logs mysterious entities that do little more than take up valuable server disk space.

A full transaction log, or a log taking up a lot of disk space, leads to using quick and dirty solutions such as clearing the transaction log. This is rarely the correct way to solve the problem and can actually lead to potential data disaster. It’s all a fun little circle of misunderstanding.

Getting to know basic transaction log concepts will help you dispel some of the confusion and start effectively managing them.

What Database Transaction Logs Do

The transaction log keeps a history of changes to data within a database. If the database encounters issues, SQL Server can recover and maintain database integrity. It does not keep a log of the actual SQL statements that are executed. The transaction log only contains information related to how the actual data itself changed, so taking the quick and dirty option mentioned above removes the ability for the database to recover if something goes wrong.

How Logs Work

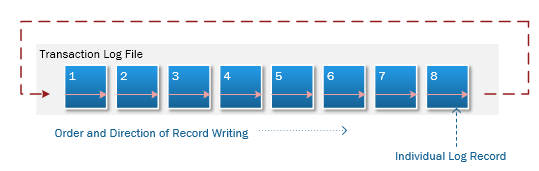

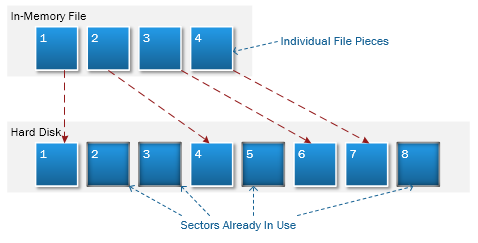

Log records are written sequentially within the log file, unlike how files are written to a hard drive.

When SQL Server gets to the end of the transaction log, it goes back to the beginning of the log and starts over. This concept becomes important later when discussing backups and managing the size of the transaction log.

Figure 1: Writing Records to a Transaction Log

Figure 2: Writing File Pieces to Disk

It’s also worth noting that log records for a transaction are actually written before data is saved to the physical database file. This is called Write-ahead Logging and allows SQL Server to recover and reconcile data if a problem occurs.

For example, if a database crashes before data changes are written to disk, SQL Server will compare the data against the logs. If the data doesn’t match the logs, then the server can execute the unfinished transactions again. If log records were written after the data changed, recovering from this scenario would be impossible. Data integrity could not be guaranteed.

The Problem with Transaction Logs

Transaction logs can grow out of control when not properly maintained.

Every time data is changed in the database, records get added to the transaction log.

If a transaction is run against a very large table, the transaction log must record and store all of those data changes concurrently until the transaction is completed. Since the log writes its data to disk, this can eat up a lot of disk space very quickly.

Solutions to Simplify Managing Them

So, how do you maintain a transaction log that provides data security but also doesn’t eat up all of a server’s disk space? Here are some basic steps to get started.

Recovery Models

First, choose the right database recovery model.

There are three that can be used with a SQL Server database but the most commonly used models are SIMPLE and FULL. The third option, BULK_LOGGED, is typically used only temporarily for large operations where performance is critical. The sacrifice to get this performance boost is potential data loss if something goes wrong.

SQL Server defaults to a FULL recovery model when creating new databases and is the best model to use for mission-critical databases. The general differences between SIMPLE and FULL models are detailed below.

| Simple | Full |

|

|

If the database contains mission-critical data that cannot be lost or have discrepancies, then use the FULL model.

However, the SIMPLE recovery model can be used if the data is less critical where it is acceptable to simply recover from the previous night’s backups. Using the SIMPLE model will save you a lot of disk space, but at the cost of data safety.

Need help with your recovery model? Get the guide >

Growth Limits

By default, SQL Server sets the transaction log to no maximum size.

Leaving this unchanged allows the possibility that the log could completely fill your drive’s free space.

Setting a maximum size prevents SQL Server from running amok with your drive. When the log writer gets to the end of the log file, it simply goes back to the very beginning of the log and overwrites old entries.

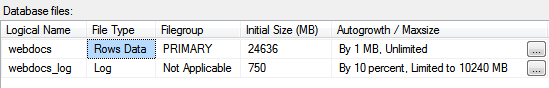

Figure 3 – Database File Maximum Sizes

Setting an appropriate maximum size is very important to help you avoid errors where the transaction log is full of active records. This can happen during large operations, such as rebuilding a large index. That's because it must be completed in a single transaction and contains a lot of data.

One way of estimating an appropriate maximum transaction log size is to set it to at least the size of the largest index in the table.

Since rebuilding the index requires at least the same amount of space in the transaction log as the index itself, the transaction log will always have enough space to complete the operation.

In Figure 3 the transaction log’s maximum size is set to 10240MB. The largest index in that database is roughly 2100MB, so there is plenty of space in the transaction log.

Need help with your disk space? Get the guide >

Backup, Backup, Backup

Finally, take consistent and automated backups. There are many ways of setting this up (and is beyond the scope of this post).

If the recovery model is set to SIMPLE, the only option is to perform backups of the data; backing up the transaction log is not possible. Each backup will be a single snapshot of the data in the database at the time of the backup.

If the recovery model is set to FULL, then transaction log backups allow the database to be fully restored to a particular point in time and aren’t constrained to only one specific moment in time.

Log backups also perform the important task of marking existing log records as inactive. Inactive log record space can then be reused by the transaction log when it comes back to that section of the log file since it writes to the log in a sequential order.

It’s also important to note that performing a “full backup” from the backup options does not back-up the transaction log, even when the database is in the FULL recovery model.

Need Help Managing Your Database Transaction Logs?

Solve your disk space and data backup problems with the right strategy.